The Undoing Project by Michael Lewis

Review

An excellent biography of Behavioural Economics and its fathers. Before Daniel Kahneman and Amos Tversky, economics (and economists) had always assumed that humans are mostly rational beings. We used to believe that people make the best decision based on the information available at the time, and that those decisions are reproducible. Now we know that this is untrue. In the words of Dan Ariely, we are ‘predictably irrational’.

You will learn about the relationship between Danny and Amos, and how their personalities and working dynamic produced one of the most prominent theories in psychology and economics. Lewis tells the journey engagingly, from their childhoods to how the wars in Israel affected their work together.

Lewis starts the book with a story of his ‘Moneyball’ approach applied to the NBA. I think this is the most significant weakness of the book because this chapter does not tie in with the rest. Once you start the next chapter, it feels like you are reading an entirely different book. It would have been better if he had removed it.

I love the book and rate it a solid 4 out of 5 stars.

Summary

This book is a hard one to summarise because of its narrative and density. It reads like a joint biography but also contains a significant amount of distillable knowledge. I will try my best to summarise both.

Moneyball in the NBA

In the NBA, scouts relied on their gut feeling when selecting new players for the draft. They were not aware of the many biases affecting that feeling, which resulted in many bad decisions over the years.

Daryl Morey introduced a new way of analysing players using statistical data. He even avoided interviewing players because he thought a face-to-face meeting would affect the decision too much. This new approach worked well, but it was still not impervious to human bias. One of the most famous cases was Jeremy Lin, as quoted from the book:

“He lit up our model,” said Morey. “Our model said take him with, like, the 15th pick in the draft.” The objective measurement of Jeremy Lin didn’t square with what the experts saw when they watched him play; a not terribly athletic Asian kid. Morey hadn’t completely trusted his model - and so had chickened out and not drafted Lin. A year after the Houston Rockets failed to draft Jeremy Lin, they began to measure the speed of a player’s first two steps: Jeremy Lin had the quickest first move of any player measured. He was explosive and was able to change direction far more quickly than most NBA players. “He’s incredibly athletic,” said Morey. “But the reality is that every person, including me, thought he was unathletic. And I can’t think of any reason for it other than he was Asian.”

The incident led Morey to improve his model further and to add more rules for his scouts. They could only make a comparison if the players were from different racial backgrounds, so no more comparing a new black athlete with Michael Jordan or an Asian athlete with Yao Ming. Most of the time, the favourable comparison disappeared.

Myth of Rational Actor, Biases and Heuristics

The belief that people mostly make the right decision from the information they have, after weighing utility and value against cost, was prevalent in economics, alongside Adam Smith’s concept of the invisible hand. It influenced all sorts of government policy and decision making.

Danny and Amos did not originally set out to disprove this, but the data steered them towards that conclusion. In the course of their studies, they found that people use heuristics to make quick judgments instead of reasoning rationally. We will explore several examples:

1. Confirmation Bias

When a basketball scout likes a certain player, they will organise the data to support that impression, which is why the first impression is critical. Scouts often made matters worse because they tended to favour players who resembled their younger selves, even though that is irrelevant to the analysis.

People do this all the time without realising it. This bias is exploited constantly in the political arena. Once a person is committed to a party or candidate, they start to seek reasons to support their side and reasons to vilify the opponent. Over time, this polarises the nation further and further. Two people with opposing viewpoints can look at the same event and come to two wildly different perceptions because of their biases.

2. Anchoring

People anchor their decisions on arbitrary priors. For example:

Two groups were asked to estimate the result of 8! (eight factorial). One group was presented with 1x2x3x4x5x6x7x8 while the other got 8x7x6x5x4x3x2x1. The second group’s answers were reliably around five times larger than the first group’s.

You can even anchor an answer to something completely random, like the last digits of a telephone number. People whose number ends in 98 give a much higher estimate than people whose number ends in 06.
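For the factorial example above, a small Python sketch can show why the order of the numbers matters. It prints the true value of 8! and the running product after each multiplication step for both orderings. The variable names are mine, and the reading that people extrapolate from the first few steps is an assumption about the mechanism, not something measured here.

```python
import math
from itertools import accumulate
from operator import mul

ascending = list(range(1, 9))   # 1x2x3x4x5x6x7x8
descending = ascending[::-1]    # 8x7x6x5x4x3x2x1

# Running product after each multiplication step, left to right.
asc_partial = list(accumulate(ascending, mul))
desc_partial = list(accumulate(descending, mul))

true_value = math.factorial(8)

print("true value of 8!:", true_value)
print("ascending running products: ", asc_partial)
print("descending running products:", desc_partial)
```

Both orderings end at the same product, but after four steps the descending sequence is already in the thousands while the ascending one is still in the tens. Someone who stops early and extrapolates starts from a very different anchor.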

3. Present and Hindsight Bias

Present Bias says that people tend to undervalue the future relative to the present. People prefer cash today even though investing it would yield more over the years.

The Present Bias is amplified by Hindsight Bias, in which people tend to look at an unpredictable outcome and assume it was predictable all along. For example, suppose that the market crashed last week. The people who bought a new car last month instead of investing the money would think to themselves that their hunch was right and they knew the crash was coming all along.

4. Availability Heuristics

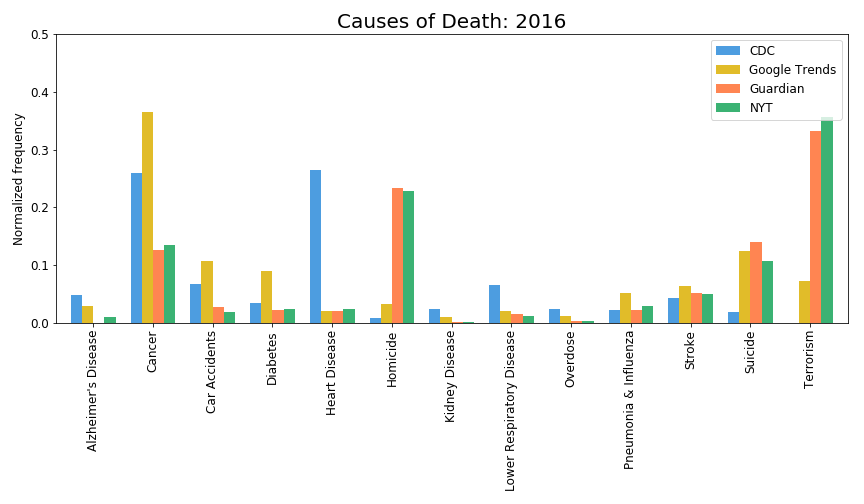

Availability Heuristics is the bias that has led many people to avoid the news nowadays. People make the judgement error of assuming that the easier it is to recall an instance of something happening, the more likely that thing is.

For example, the news covers deaths from terrorism en masse, thus increasing their ‘availability’. People who watch the headlines would think terrorism is on the rise and causing massive damage, even though the actual number of deaths is minimal compared to under-reported deaths from heart disease.

Owen Shen made a fantastic graphical representation, Death: Reality vs Reported, in which he compares the amount of reporting with the actual number of deaths. You should read his article in its entirety.

image credit: Owen Shen


These heuristics lead people to both ‘excessive fear and unjustified complacency’, so we have to be very careful with them.

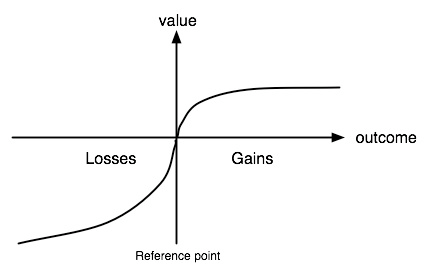

5. Loss Aversion, Prospect Theory and Endowment Effect

These three ideas are tightly intertwined. The Endowment Effect is when people value things they own much more than things they do not, simply because they own them. It is closely tied to Loss Aversion, which says that losses hurt more than gains feel good. We can estimate the pain of losses or the joy of gains with this graph:

image credit: wikipedia


Danny and Amos conducted a study with the following questions:

First situation:

You have $1,000, and you must pick one of the following choices:

Choice A: You have a 50% chance of gaining $1,000, and a 50% chance of winning $0.

Choice B: You have a 100% chance of winning $500.

Second situation:

You have $2,000, and you must pick one of the following choices:

Choice A: You have a 50% chance of losing $1,000, and a 50% chance of losing $0.

Choice B: You have a 100% chance of losing $500.

People overwhelmingly pick B in the first situation and A in the second, even though both situations lead to exactly the same final amounts of money. This pattern follows the asymmetrical value function of the Prospect Theory curve.
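The two situations can be checked with a short Python sketch. The first part shows that each choice leads to the same final wealth in both situations. The second part scores each choice with a simple value function in which losses weigh more than gains. The function names are mine, and the parameters `alpha=0.88` and `lam=2.25` are the estimates usually quoted from Tversky and Kahneman's later work; they are an assumption here, not figures from the book. Probability weighting is left out to keep the sketch small.

```python
def final_wealth(start, outcomes):
    """Distribution of final wealth for a list of (probability, change) pairs."""
    return sorted((p, start + change) for p, change in outcomes)

def value(x, alpha=0.88, lam=2.25):
    """Subjective value of a gain or loss x, measured from the reference point.

    alpha < 1 gives diminishing sensitivity; lam > 1 makes losses loom larger.
    Both parameter values are assumed for illustration.
    """
    return x ** alpha if x >= 0 else -lam * ((-x) ** alpha)

def subjective(outcomes):
    """Probability-weighted subjective value (no probability distortion)."""
    return sum(p * value(change) for p, change in outcomes)

# First situation: start with $1,000, choices framed as gains.
start_1, a1, b1 = 1000, [(0.5, 1000), (0.5, 0)], [(1.0, 500)]
# Second situation: start with $2,000, choices framed as losses.
start_2, a2, b2 = 2000, [(0.5, -1000), (0.5, 0)], [(1.0, -500)]

# In terms of final wealth, the two situations are identical.
print("A:", final_wealth(start_1, a1), final_wealth(start_2, a2))
print("B:", final_wealth(start_1, b1), final_wealth(start_2, b2))

# In terms of subjective value, the preferred choice flips with the framing.
print("first situation:  A =", subjective(a1), " B =", subjective(b1))
print("second situation: A =", subjective(a2), " B =", subjective(b2))
```

Under these assumed parameters the sure gain scores higher than the gamble in the first situation, while the gamble scores higher than the sure loss in the second. That matches the choices people actually made.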

More Cognitive Biases

To learn about more cognitive biases, please visit this Betterhuman article.

Danny and Amos

Both were Israeli geniuses. Amos served as a paratrooper, while Danny escaped Nazi-occupied France and went on to design many of the Israeli army’s psychological tests.

Amos was very confrontational, while Danny avoided confrontation. Amos was an extrovert, while Danny was an introvert. Amos was an outright optimist, while Danny was a pessimist.

Amos used to say, “when you are a pessimist, and the bad thing happens, you live it twice. Once when you worry about it, and the second time when it happens.” Danny, by contrast, thought that when you expect the worst, you are never disappointed.

They had very little in common besides having studied at the Hebrew University in Jerusalem together. However, they proved to be each other’s perfect match, almost until the end.

Amos got the more prestigious job offers, from Harvard and Stanford (the latter of which he took). Danny got a job at the University of British Columbia in Vancouver, Canada, which was less prestigious than Amos’s position. After their falling out, they began to act like a divorced couple, trying to stay out of each other’s way and starting to work with other people.

Daniel Kahneman won the Nobel Prize in Economics for his work on human behaviour, which might have been shared with Amos Tversky had Amos not been taken away by cancer. The Nobel Prize is not awarded posthumously. Kahneman wrote one of my favourite books of all time, “Thinking, Fast and Slow”, which explores a lot of the heuristics and biases that we talked about here, but in much more detail.

Danny and Amos’s work arguably shaped a lot of current policy. Changing the default in retirement savings plans increased the savings rate in the general population. People also started to pay attention to accident rates when drivers are distracted by cellphones or drive too fast, resulting in law changes to make road travel safer.